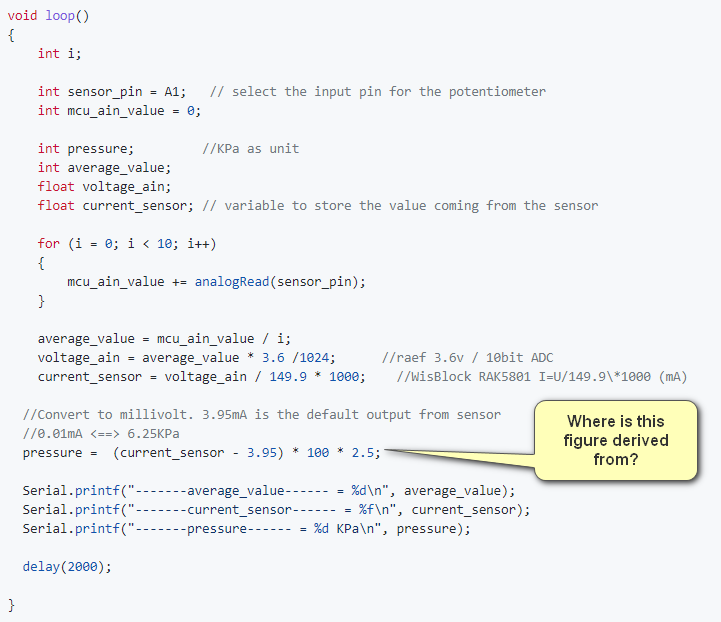

Hi everyone, I’m setting up a pressure transducer using the 5801 with a 0-3 m sensor. I loaded the test sketch and it works really well. To get the correct depth for my sensor (assuming it’s linear), I think I would take the sensor_range (3 m), divide it by the current range (16 mA), then multiply it by current_sensor. Is this correct? I’m struggling to work out the “pressure” component of the example sketch (see the screenshot). Thanks in advance.

Hello @Andy

Thanks for finding a bug. This number makes no sense. We need to update our examples.

I do not know the sensor that was used when the example was written, so I asked our R&D team (they actually have this sensor).

For this specific pressure sensor (0 to 10 MPa), the correct formula works out as follows.

At 0 MPa, the output is 3.95 mA (nominally 4 mA).

Each mA is then a 0.625 MPa step: 10 MPa / 16 mA = 0.625 MPa/mA = 625 kPa/mA.

So the pressure in kPa is calculated as (<measured current> - 3.95 mA) * 625.

The calculation in the sketch should be

pressure = (current_sensor - 3.95) * 625; // kPa

For your application, you need to calculate the step size in metres per mA:

m_per_step = (3.0 / 16.0); // 0.1875 (use 3.0/16.0, because 3/16 is integer division and gives 0)

depth_measured = (measured_current - 4) * 0.1875;

This assumes that 0m depth is 4mA. You need to check with your sensor specs what the output at 0m actually is. In the pressure sensor from our example it was not 4mA but 3.95mA.